Benchmarks

Signal over noise

Benchmarks are our primary source of real data before we have users. We care about signal, not noise: a few high-quality metrics that actually reflect agent quality beat a comprehensive but misleading suite.

Our evals are public and reproducible. The builds we evaluate are publicly downloadable, and the evaluated codebase is available at AntigmaLabs/ante-preview, so anyone can verify the results independently.

Terminal Bench results

- Topped the Terminal Bench 1.0 leaderboard in 2025

- Topped the Terminal Bench 2.0 leaderboard as a verified agent and remain best in class among Gemini-based agents (February 2026)

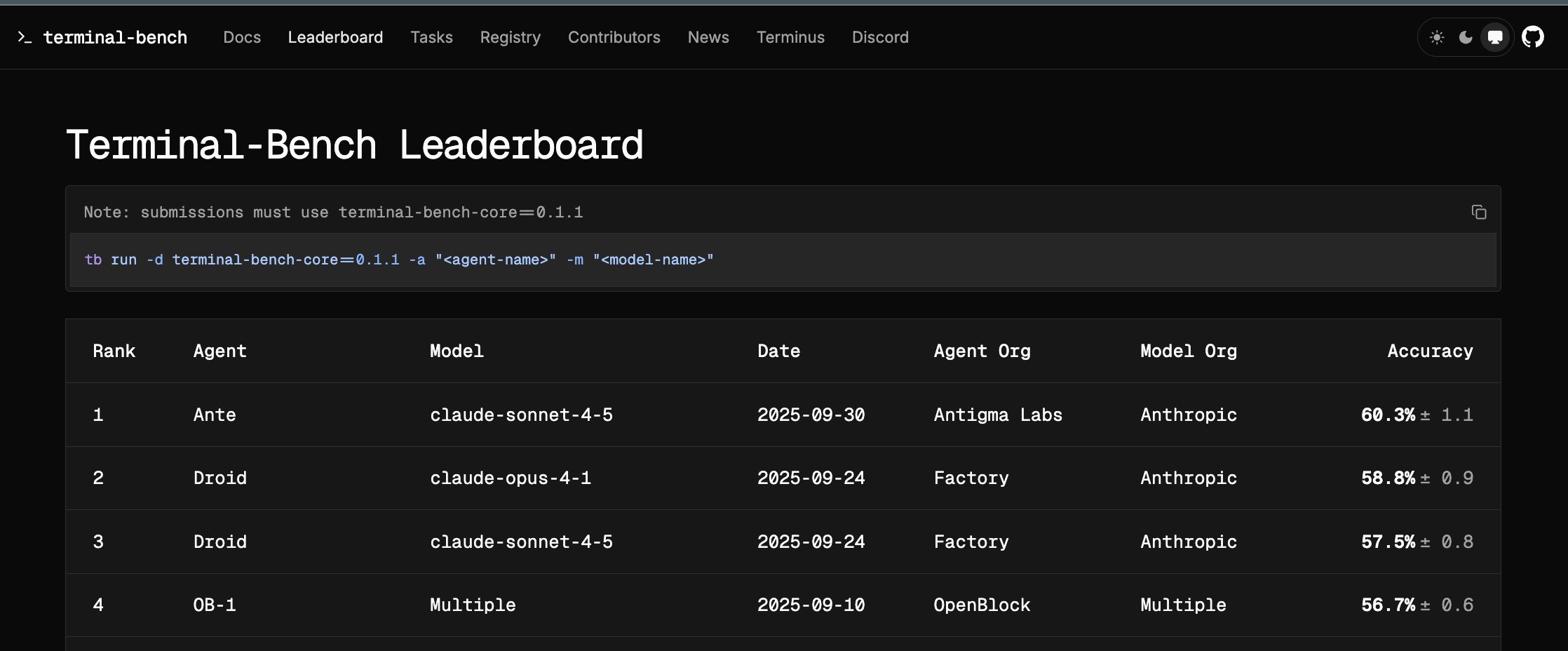

Terminal Bench 1.0. Ante placed first on the public leaderboard on September 30, 2025.

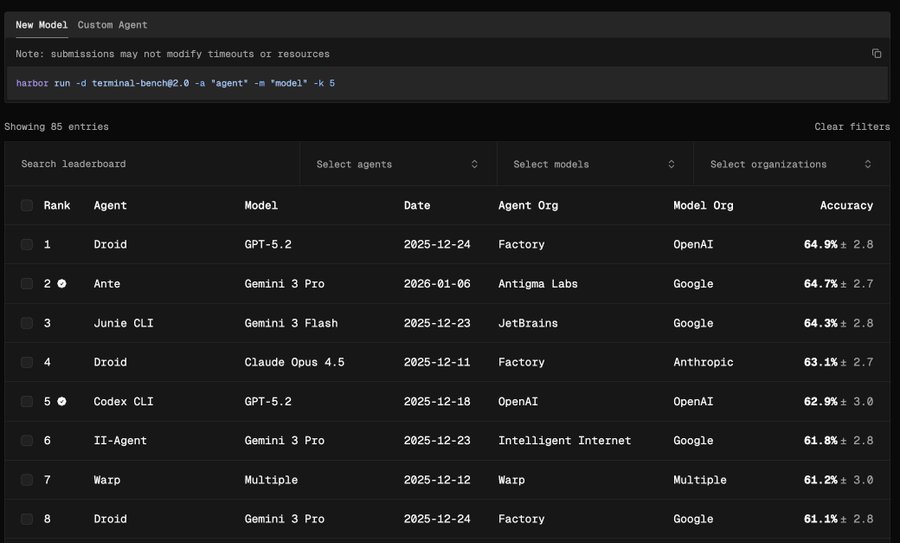

Terminal Bench 2.0. Ante ranked second overall on January 6, 2026, and was the highest-ranked Gemini-based agent on the displayed leaderboard.

We use Terminal Bench and Harbor as our primary external benchmark. The people behind Harbor and Terminal Bench are genuine researchers: rigorous, principled, and motivated by getting it right rather than by hype.

Why Terminal Bench:

- Rigorous. Unambiguous task specs, deterministic grading where possible, and isolated execution environments.

- Focused on core capability. Can the agent accomplish real tasks in a real shell? Reading context, reasoning, acting, verifying — the exact loop we are building Ante around.

Evaluation principles

Most of the magic comes from the model — but the agent harness is the critical conduit between human and AI.

We evaluate the agent and how well it channels the model's power — not the model itself.

- Improve the harness, not the prompt. When eval scores need to go up, we invest in the agent chassis (the runtime, orchestration, and reliability), not in prompt engineering for a specific benchmark. If a score improves because the system genuinely got better, it stays improved. If it improved because we tuned a prompt, it's fragile.

- Start early, start simple. A small but honest eval set drawn from actual failures beats a large contrived one.

- Isolate and reproduce. Every eval run starts clean, so when a score drops, we know it reflects a real regression.
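As a rough illustration of the "isolate and reproduce" principle, here is a minimal Python sketch. It is not Ante's actual harness: the names `run_isolated`, `score`, and `writes_marker` are hypothetical, and a fresh temporary directory stands in for whatever isolation a real eval environment provides.

```python
import tempfile
from pathlib import Path
from typing import Callable

# Hypothetical type: a task receives its working directory and reports pass/fail.
Task = Callable[[Path], bool]


def run_isolated(task: Task) -> bool:
    """Run one eval task in a fresh, empty working directory."""
    # TemporaryDirectory creates a new directory and deletes it on exit,
    # so no files leak from one run into the next.
    with tempfile.TemporaryDirectory(prefix="eval-") as workdir:
        return task(Path(workdir))


def score(tasks: dict[str, Task]) -> float:
    """Fraction of tasks that pass, each run in its own clean directory."""
    results = [run_isolated(task) for task in tasks.values()]
    return sum(results) / len(results) if results else 0.0


def writes_marker(workdir: Path) -> bool:
    # Hypothetical task: passes only if it starts from an empty directory,
    # then leaves a file behind that would pollute any shared state.
    clean = not any(workdir.iterdir())
    (workdir / "marker.txt").write_text("done")
    return clean


if __name__ == "__main__":
    print(score({"first": writes_marker, "second": writes_marker}))
```

Both runs pass only because each one starts in its own empty directory. If the two runs shared a directory, the second would see the file left by the first and fail, which is the kind of spurious score change that clean starts are meant to rule out.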